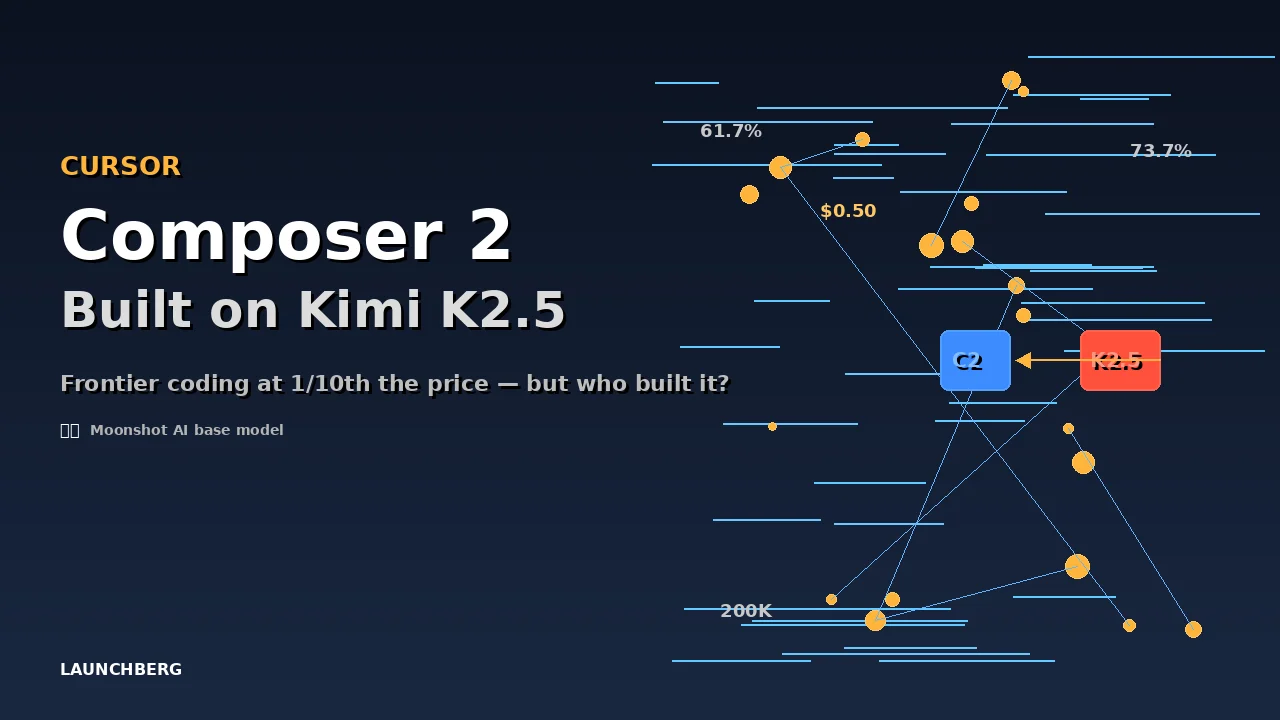

Cursor Composer 2 Is Fast, Cheap, and Built on Kimi K2.5

Cursor's new coding agent costs one-tenth as much as frontier models and scores 61.7% on Terminal-Bench 2.0. Then developers found the model ID, and it pointed straight to Moonshot AI's Kimi K2.5.

A developer poking around Cursor’s API on launch day found something the announcement blog post didn’t mention. The internal model identifier for Composer 2 read accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast — pointing directly at Kimi K2.5, a foundation model built by Beijing-based Moonshot AI.

Cursor hadn’t said a word about it.

What Composer 2 Actually Is

Strip away the controversy and the product is genuinely impressive for what it costs. Composer 2 scores 61.7% on Terminal-Bench 2.0. That trails Claude Opus 4.6 at 65.4% and sits well behind OpenAI’s GPT-5.4 at 75.1%, but it is a strong showing for a model at this price. On SWE-bench Multilingual it hits 73.7%, and on CursorBench it lands at 61.3%.

The pricing is the headline number for most developers: $0.50 per million input tokens and $2.50 per million output. That’s an 86% drop from Composer 1.5, which charged $3.50/$17.50. A faster variant runs at $1.50/$7.50 — still dramatically cheaper than using frontier models directly. The context window sits at 200,000 tokens.
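The arithmetic behind those figures is easy to check. The sketch below uses only the per-million-token prices quoted above; the dictionary keys (`composer-1.5`, `composer-2`, `composer-2-fast`) are illustrative labels rather than real API model names, and the token counts in the usage note are a made-up example session, not measured usage.

```python
# Per-million-token prices in USD, as quoted in the article.
# The keys are hypothetical labels, not official model identifiers.
PRICES = {
    "composer-1.5": {"input": 3.50, "output": 17.50},
    "composer-2": {"input": 0.50, "output": 2.50},
    "composer-2-fast": {"input": 1.50, "output": 7.50},
}


def percent_drop(old: float, new: float) -> float:
    """Return the price reduction from old to new, as a percentage."""
    return (old - new) / old * 100


def session_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one session at the quoted per-million rates."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    old, new = PRICES["composer-1.5"], PRICES["composer-2"]
    print(f"input drop:  {percent_drop(old['input'], new['input']):.1f}%")
    print(f"output drop: {percent_drop(old['output'], new['output']):.1f}%")

    # Hypothetical agent session: 2M input tokens, 200k output tokens.
    for model in PRICES:
        print(f"{model}: ${session_cost(model, 2_000_000, 200_000):.2f}")
```

Both the input and output prices fall by about 85.7%, which rounds to the 86% in the announcement. For the hypothetical session above, the cost works out to $10.50 on Composer 1.5, $1.50 on Composer 2, and $4.50 on the faster variant.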

In practice, Composer 2 is tuned for the kind of multi-step work that actually eats developer time — navigating codebases, editing files, running terminal commands, iterating toward a fix. It’s trained specifically for tool use inside Cursor’s environment, not general-purpose chat.

The Kimi K2.5 Revelation

Hours after launch, the model ID leak spread across X. Cursor’s initial response was silence. Then VP of developer education Lee Robinson acknowledged that Composer 2 “started from an open-source base,” saying only about a quarter of the compute spent on the final model came from the Kimi K2.5 base, with the rest coming from Cursor’s own fine-tuning and reinforcement learning.

Two days later, co-founder Aman Sanger was more direct: “It was our omission not to mention Kimi as the base model from the start in the blog.”

Moonshot AI’s official account confirmed the relationship was legitimate — an “authorized commercial partnership” brokered through Fireworks AI. No licensing violation. But here’s the wrinkle: Kimi K2.5’s license requires any product generating over $20 million in monthly revenue to prominently display “Kimi K2.5” in its interface. Cursor reportedly exceeds that threshold by roughly 8x.

Whether Cursor is currently in compliance — or whether the partnership agreement carves out an exception — remains unclear.

Why This Matters More Than the Benchmarks

The Composer 2 launch exposed something the AI coding tool market hasn’t had to confront yet: provenance. When you pay for a “Cursor model,” what exactly are you getting? A model in which roughly a quarter of the compute came from the Kimi K2.5 base and the rest from Cursor’s fine-tuning, apparently. That’s not necessarily bad. It’s how most commercial software works, building on open foundations. But the instinct to hide it is the problem.

Nvidia’s Nemotron coalition — which includes Cursor alongside Perplexity and Mistral — is built on the premise that AI companies can share infrastructure openly while competing on product. Cursor’s initial silence on Kimi K2.5 cuts against that premise. If your coding agent is built on a Chinese foundation model and you don’t disclose it until someone reverse-engineers the API, you’ve created a trust problem that no benchmark score can fix.

The competitive picture is also shifting fast. MiniMax’s M2.7 is pushing self-evolving training loops. Xiaomi’s MiMo-V2-Pro claims parity with GPT-5.2 and Opus 4.6 at the 1T-parameter scale. Cursor’s bet — that a well-tuned derivative model inside a great editor is worth more than a frontier model in a generic chat window — might be right. But “well-tuned derivative” only works as a value proposition if you’re upfront about what you’re deriving from.

At $0.50 per million input tokens, Composer 2 is the cheapest frontier-adjacent coding model you can use today. The question is whether “frontier-adjacent” needs an asterisk.