Nvidia's Nemotron Coalition: Cursor, Perplexity, Mistral

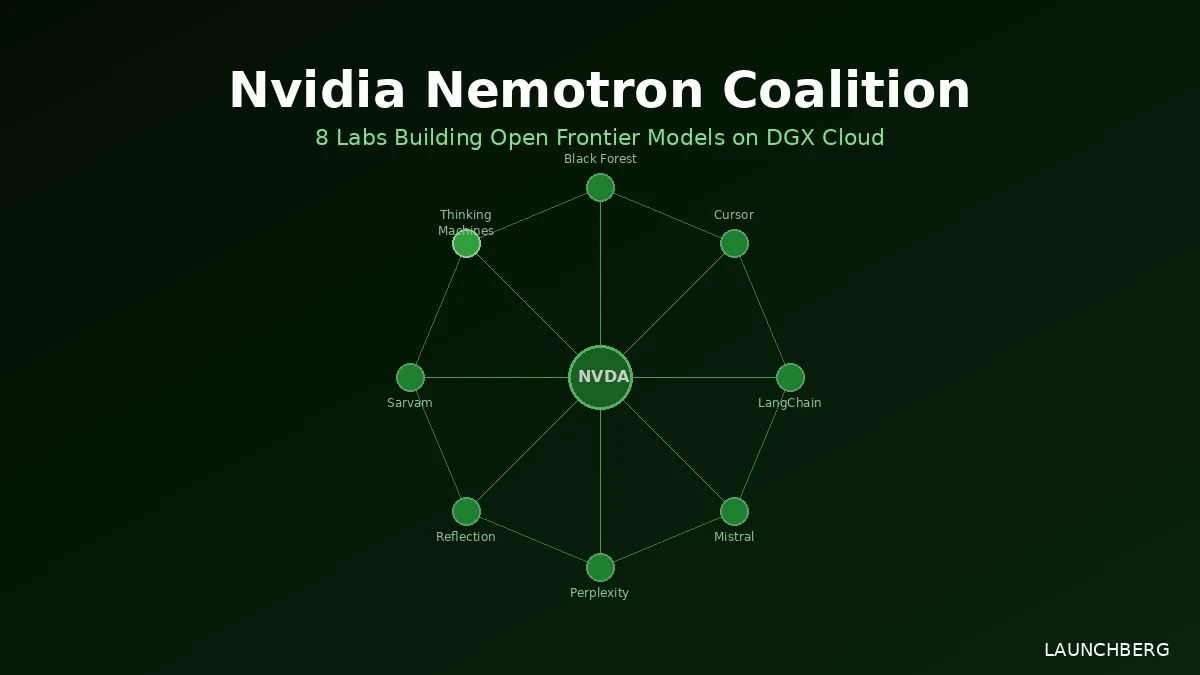

Nvidia is forming an 8-lab coalition to build open frontier models on DGX Cloud. Mira Murati's Thinking Machines Lab is a founding member.

The most interesting name on the coalition member list is Thinking Machines Lab — Mira Murati’s startup, founded after she left OpenAI. It’s the newest of the eight organizations, and the fact that Nvidia brought it in as a founding member is a signal about who Nvidia wants as credibility anchors for this effort.

Announced at GTC 2026, the Nemotron Coalition brings together eight AI labs to collaboratively build an open foundation model trained on Nvidia DGX Cloud: Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam AI, and Thinking Machines Lab. The first concrete deliverable is a base model co-developed by Nvidia and Mistral AI, which will anchor the upcoming Nemotron 4 model family.

What the Coalition Is Actually Building

The shared goal is an open foundation model — the base on which each partner can build specialized versions for their own domains. Cursor brings evaluation data from real-world coding tasks. LangChain is focused on reliable tool use and long-horizon agentic reasoning. Perplexity brings experience optimizing for accessible, high-performing systems at query scale. Black Forest Labs contributes multimodal and visual intelligence. Sarvam AI is handling sovereign AI capabilities — voice-first, multilingual, culturally specific models. Reflection AI is focused on safety and dependability. Thinking Machines Lab’s stated mission is democratizing frontier AI access.

The resulting models will be open-sourced, meaning developers and enterprises can customize them for specific industries without paying for a closed API.

Why DGX Cloud

The infrastructure problem is what’s kept many of these labs from building at this scale independently. Multi-node training across tens of thousands of GPUs is expensive and operationally complex. DGX Cloud removes that barrier — access via browser, no capital investment in hardware. Nvidia handles the infrastructure; the partners contribute research, data, and domain expertise.

This is a meaningful advantage over trying to coordinate the same collaboration on a third-party cloud. Nvidia controls the hardware layer, which means the coalition gets compute allocation that independent labs couldn’t negotiate individually.

Where Nemotron Stands Now

Nemotron 3 Super, the current generation’s mid-tier model, is already deployed in multi-agent workloads. The full Nemotron 3 family runs from Nano (3.2B active parameters) through Super (~100B total parameters, 10B active) up to Ultra (~500B total parameters, 50B active). All use a hybrid Mamba-Transformer mixture-of-experts (MoE) architecture with a 1-million-token context window.
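To make the active-versus-total distinction concrete, here is a minimal sketch that computes what share of each model's parameters is active per token, using only the approximate figures quoted above. The class and function names are hypothetical, and Nano's total parameter count is not stated here, so it is left unset rather than guessed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MoEModel:
    """Approximate sizes as quoted in this article, in billions of parameters."""
    name: str
    active_b: float            # parameters active per token
    total_b: Optional[float]   # total parameters; None when the article gives no figure

    def active_fraction(self) -> Optional[float]:
        """Share of parameters used per token, or None if the total is unknown."""
        if self.total_b is None:
            return None
        return self.active_b / self.total_b


# Figures taken from the paragraph above; Super and Ultra totals are approximate ("~").
NEMOTRON_3 = [
    MoEModel("Nano", 3.2, None),
    MoEModel("Super", 10.0, 100.0),
    MoEModel("Ultra", 50.0, 500.0),
]


def summarize(models):
    """Map each model name to its active-parameter fraction."""
    return {m.name: m.active_fraction() for m in models}


if __name__ == "__main__":
    for name, frac in summarize(NEMOTRON_3).items():
        label = "total not stated" if frac is None else f"{frac:.0%} active"
        print(f"{name}: {label}")
```

On these numbers, Super and Ultra each activate roughly a tenth of their parameters per token, which is why MoE models can cost far less to run than their total size suggests.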

Nemotron 4, built on the coalition’s jointly-developed base model, will be the next generation. Nvidia hasn’t given a timeline.

Nvidia’s Ecosystem Play

The Nemotron Coalition isn’t Nvidia’s only open model initiative. The Nemotron family sits alongside five other frontier model lines: Cosmos (world/vision models), Isaac GR00T (robotics), Alpamayo (autonomous driving), BioNeMo (biology/chemistry), and Earth-2 (climate). The pattern is the same across all of them: Nvidia provides the compute and training infrastructure; external partners and researchers provide the domain knowledge.

The coalition model is smart positioning against Anthropic, OpenAI, and Google, whose flagship models are closed. With its Vera Rubin hardware advantage, Nvidia benefits every time someone trains an open model on Nvidia chips, which almost everyone does. Supporting open frontier models is good for GPU sales whether or not any specific model wins.

Eight labs, one base model, a roadmap for Nemotron 4. The first real test is whether the co-developed Nvidia-Mistral base model can compete with what the closed labs are shipping.