Nvidia Vera Rubin and Groq 3: The GTC 2026 Hardware Blitz

Seven chips in production, a purpose-built agentic CPU, 256-LPU inference racks, and $1 trillion in orders. Nvidia's GTC 2026 keynote rewrites the datacenter playbook.

Jensen Huang walked onstage at GTC 2026 and announced seven new chips at once. Not roadmap slides. Not "coming soon." Seven chips in full production, shipping as an integrated platform called Vera Rubin. It's the most aggressive hardware launch Nvidia has ever attempted, and the inference story buried inside it is more interesting than the headlines suggest.

The Vera Rubin Platform: What's Actually In It

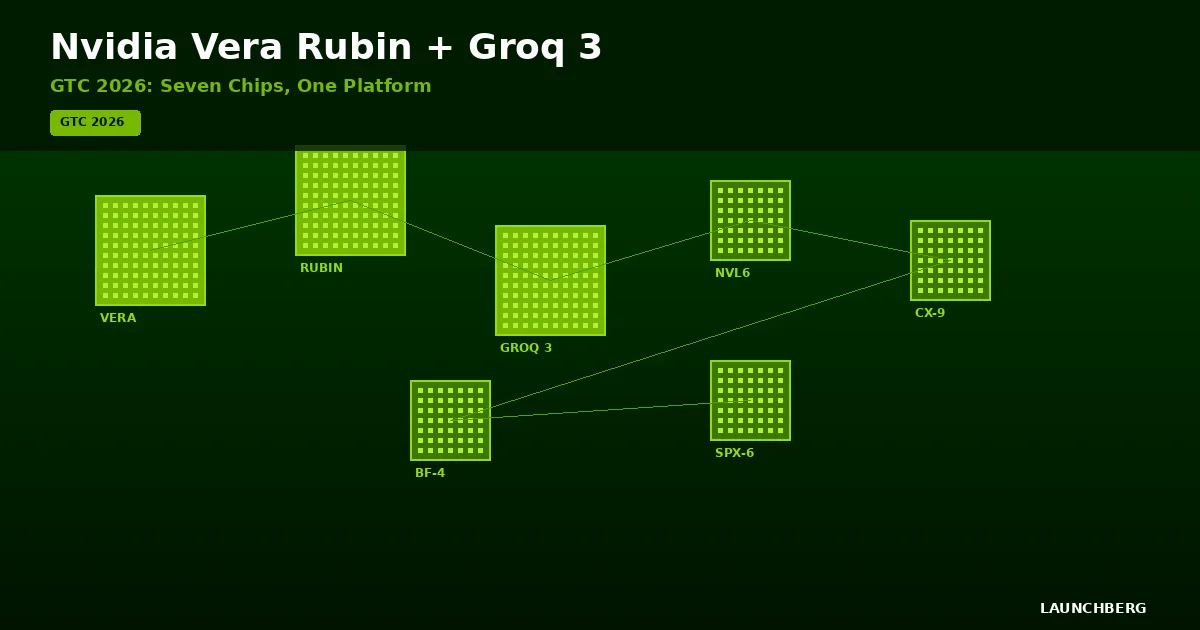

Vera Rubin isn't a single chip. It's an ecosystem of seven: the Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 Ethernet switch with co-packaged optics, and the newly integrated Groq 3 LPU. All seven ship together. All seven are designed to function as a unified system.

The Vera CPU is the most strategically important piece. An 88-core custom Arm processor with 227 billion transistors, it supports 1.5 TB of LPDDR5X memory, triple the previous Grace CPU, with 1.2 TB/s of bandwidth. Nvidia's new MGX rack reference design packs 256 Vera CPUs into a single liquid-cooled rack: roughly 22,500 cores (256 × 88 = 22,528) and about 400 TB of memory (256 × 1.5 TB works out to 384 TB, so the quoted figure is rounded up). That's a purpose-built CPU for agentic AI workloads, and it's the clearest signal yet that Nvidia sees inference and agent orchestration as the next volume market.

The Rubin GPU runs on TSMC's 3nm process with 336 billion transistors across two reticle-sized dies. Each GPU delivers 50 PFLOPS of FP4 inference — 5x Blackwell — and carries 288 GB of HBM4 with 22 TB/s memory bandwidth. That memory bandwidth number is 2.8x what Blackwell offered, and it matters more than raw FLOPS for large language model workloads that are increasingly memory-bound.
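To make the memory-bound point concrete, here is a rough roofline-style sketch built only from the two per-GPU figures quoted above. The decode intensity of 4 FLOPs per byte is an illustrative assumption (batch-size-1 decoding, about 2 FLOPs per weight, 0.5 bytes per FP4 weight, KV cache and activations ignored), not an Nvidia figure, and the function and constant names are hypothetical.

```python
# Per-GPU figures quoted in the article for Rubin.
PEAK_FP4_FLOPS = 50e15          # 50 PFLOPS of FP4 inference
HBM4_BYTES_PER_SECOND = 22e12   # 22 TB/s of HBM4 bandwidth

# ASSUMPTION (not from Nvidia): batch-size-1 decoding reads every FP4 weight
# (0.5 bytes) once and performs about 2 FLOPs with it, i.e. 4 FLOPs per byte.
ASSUMED_DECODE_FLOPS_PER_BYTE = 4.0


def ridge_point(peak_flops: float, bytes_per_second: float) -> float:
    """FLOPs per byte a kernel needs before compute, not memory, is the limit."""
    return peak_flops / bytes_per_second


def attainable_flops(flops_per_byte: float, peak_flops: float,
                     bytes_per_second: float) -> float:
    """Simple roofline: throughput is capped by compute or by memory traffic."""
    return min(peak_flops, flops_per_byte * bytes_per_second)


ridge = ridge_point(PEAK_FP4_FLOPS, HBM4_BYTES_PER_SECOND)
decode_flops = attainable_flops(
    ASSUMED_DECODE_FLOPS_PER_BYTE, PEAK_FP4_FLOPS, HBM4_BYTES_PER_SECOND
)

print(f"Ridge point: {ridge:,.0f} FLOPs per byte")
print(f"Attainable at assumed decode intensity: {decode_flops / 1e12:,.0f} TFLOPS")
print(f"Share of peak: {decode_flops / PEAK_FP4_FLOPS:.2%}")
```

Under these assumptions the ridge point sits near 2,273 FLOPs per byte, while the assumed decode workload reaches about 88 TFLOPS, well under 1% of the quoted peak. Batching raises the intensity considerably, so treat this as a worst-case illustration, not a benchmark.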

The NVL72 Rack

The system-level configuration is the Vera Rubin NVL72: 72 Rubin GPUs, 36 Vera CPUs, 9 NVLink switch trays, roughly 1,300 chips and 1.3 million components in a single rack. The numbers are staggering — 3.6 exaflops of FP4 inference, 20.7 TB of HBM4, 260 TB/s scale-up bandwidth. Liquid-cooled, shipping second half of 2026.
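The rack-level totals follow directly from the per-GPU figures quoted earlier. The short check below multiplies them out, assuming decimal units (1 TB = 1,000 GB, 1 exaflop = 1,000 PFLOPS); the variable names are illustrative, and the per-GPU share of scale-up bandwidth is a simple even split, not a published spec.

```python
# Figures quoted in the article.
GPUS_PER_RACK = 72
FP4_PFLOPS_PER_GPU = 50
HBM4_GB_PER_GPU = 288
SCALE_UP_TB_PER_SECOND = 260   # rack-level scale-up bandwidth

# Decimal unit conversions are assumed throughout.
rack_fp4_exaflops = GPUS_PER_RACK * FP4_PFLOPS_PER_GPU / 1000
rack_hbm4_tb = GPUS_PER_RACK * HBM4_GB_PER_GPU / 1000

# ASSUMPTION: bandwidth divided evenly across GPUs, for a sense of scale only.
scale_up_tb_per_gpu = SCALE_UP_TB_PER_SECOND / GPUS_PER_RACK

print(f"FP4 inference per rack: {rack_fp4_exaflops:.1f} exaflops")
print(f"HBM4 per rack: {rack_hbm4_tb:.1f} TB")
print(f"Scale-up bandwidth per GPU (even split): {scale_up_tb_per_gpu:.2f} TB/s")
```

The first two results match the quoted 3.6 exaflops and 20.7 TB, which suggests the rack numbers are plain sums of peak per-GPU specs and not measured system throughput.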

For context: a single NVL72 rack delivers more inference compute than entire datacenters managed just three years ago.

Groq 3: The Inference Play

Here's where GTC 2026 gets interesting. Nvidia didn't just announce faster GPUs — it shipped a dedicated inference processor. The Groq 3 LPU packs 500 MB of SRAM per chip with 150 TB/s bandwidth and 1.2 PFLOPS of FP8 compute. The design philosophy is radically different from GPUs: store entire model layers in fast SRAM, eliminate DRAM access latency, generate tokens at thousands per second.

The Groq 3 LPX rack stacks 256 of these LPUs with 128 GB of combined SRAM and 40 PB/s aggregate bandwidth. Nvidia claims 35x higher inference throughput per megawatt and 10x more revenue opportunity for trillion-parameter models compared to Blackwell. Those are extraordinary claims — but the architecture is genuinely novel. SRAM-first inference at this scale hasn't been attempted before.
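A quick back-of-the-envelope check on the LPX rack figures, plus a rough sense of what 128 GB of SRAM can hold. The weights-only sizing is an assumption made here for illustration: it uses 1 byte per parameter (FP8) and ignores KV cache, activations, and any replication, so it says nothing about how Nvidia actually deploys these racks. The helper name `racks_to_hold_weights` is hypothetical.

```python
import math

# Per-LPU figures quoted in the article.
LPUS_PER_RACK = 256
SRAM_MB_PER_LPU = 500
SRAM_TB_PER_SECOND_PER_LPU = 150

# Decimal units assumed (1 GB = 1,000 MB, 1 PB = 1,000 TB).
rack_sram_gb = LPUS_PER_RACK * SRAM_MB_PER_LPU / 1000
rack_bandwidth_pb_per_second = LPUS_PER_RACK * SRAM_TB_PER_SECOND_PER_LPU / 1000


def racks_to_hold_weights(parameters: float, bytes_per_parameter: float,
                          sram_gb_per_rack: float) -> int:
    """Racks needed to keep model weights alone in SRAM (weights-only assumption)."""
    weight_gb = parameters * bytes_per_parameter / 1e9
    return math.ceil(weight_gb / sram_gb_per_rack)


print(f"SRAM per rack: {rack_sram_gb:.0f} GB")
print(f"Aggregate SRAM bandwidth: {rack_bandwidth_pb_per_second:.1f} PB/s")

# ASSUMPTION: a 1-trillion-parameter model stored at 1 byte per parameter.
print("Racks for 1T parameters at FP8:",
      racks_to_hold_weights(1e12, 1.0, rack_sram_gb))
```

The SRAM total matches the quoted 128 GB exactly, and the bandwidth comes to 38.4 PB/s, which the 40 PB/s headline figure rounds up. Under the weights-only assumption, a trillion-parameter model would span several racks.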

Huang called the Groq integration a "Mellanox moment," a reference to Nvidia's $6.9 billion acquisition of Mellanox, announced in 2019, which gave the company end-to-end networking. The Groq acquisition (reportedly $20 billion) does the same for inference. Nvidia is no longer a GPU company. It's an AI platform company, and Vera Rubin is the proof.

Performance vs. Blackwell

| Metric | Blackwell GB200 | Rubin | Improvement |
|---|---|---|---|
| FP4 Inference (per GPU) | 10 PFLOPS | 50 PFLOPS | 5x |
| Training Performance | 10 PFLOPS | 35 PFLOPS | 3.5x |
| HBM Capacity | 192 GB HBM3e | 288 GB HBM4 | 1.5x |
| Memory Bandwidth | 8 TB/s | 22 TB/s | 2.8x |
| Cost per Token (inference) | Baseline | 10x lower | 10x |

The 10x cost-per-token reduction is measured on Kimi-K2-Thinking (32K input / 8K output) with deep reasoning workloads. Real-world results will vary, but even a 3-4x improvement would reshape inference economics.
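The improvement column can be recomputed from the two value columns, and the cost claim can be read as a fraction of today's spend. The sketch below does both; the row labels and helper names are illustrative, and the relative-cost function simply inverts the reduction factor without modeling any real pricing.

```python
# (metric, Blackwell GB200 value, Rubin value) as quoted in the comparison table.
ROWS = [
    ("FP4 inference, PFLOPS per GPU", 10, 50),
    ("Training performance, PFLOPS", 10, 35),
    ("HBM capacity, GB", 192, 288),
    ("Memory bandwidth, TB/s", 8, 22),
]


def improvement(old: float, new: float) -> float:
    """Generation-over-generation ratio for a metric where higher is better."""
    return new / old


def relative_cost_per_token(reduction_factor: float) -> float:
    """Cost per token as a fraction of the Blackwell baseline (baseline = 1.0)."""
    return 1.0 / reduction_factor


ratios = {name: improvement(old, new) for name, old, new in ROWS}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")

# The quoted 10x claim next to the more conservative 3x and 4x scenarios.
for factor in (10, 4, 3):
    print(f"{factor}x cheaper -> {relative_cost_per_token(factor):.0%} of baseline cost")
```

The bandwidth ratio works out to 2.75x, which the table rounds to 2.8x. Even the conservative 3x case leaves cost per token at about a third of the baseline.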

The Roadmap: Rubin Ultra and Feynman

Rubin Ultra arrives in the second half of 2027 with four reticle-sized dies, 100 PFLOPS of FP4, 1 TB of HBM4e memory, and 32 TB/s of bandwidth per GPU. An NVL576 configuration would deliver 15 exaflops of inference. After that comes Feynman in 2028, built on TSMC's 1.6nm process with silicon photonics, which replaces electrical signal transmission with optical. Huang described it as an "inference-first" architecture.

The cadence is relentless, and it tightens to yearly from Rubin onward: Blackwell (2024) → Rubin (2026) → Rubin Ultra (2027) → Feynman (2028). Each generation targets a specific bottleneck: training compute, then memory bandwidth, then inference latency, then power efficiency.

$1 Trillion Through 2027

Huang dropped the headline number casually: at least $1 trillion in purchase orders for Blackwell and Vera Rubin systems through 2027. That's double the $500 billion estimate from 2025. He attributed the surge to a shift from training to massive-scale inference, with agentic AI loops generating far more tokens than static chat.

Thinking Machines Lab announced a multiyear partnership to deploy at least 1 gigawatt of Vera Rubin systems for frontier model training. A gigawatt. That's utility-grade power for a single customer.

What This Means

GTC 2026 confirms something the industry has been circling: the AI hardware market is bifurcating. Training needs raw FLOPS. Inference needs memory bandwidth, low latency, and energy efficiency. Nvidia's Nemotron models optimize for the software side. Vera Rubin optimizes for the hardware side. And with Groq 3, Nvidia now owns both lanes — GPU training and LPU inference — in a single platform.

The competitive question isn't whether AMD or Intel can match individual chip specs. It's whether anyone can match the system. Seven chips, designed together, shipping together, with NVLink fabric connecting everything. NemoClaw and OpenClaw handle the software layer. Nvidia isn't selling chips anymore. It's selling AI factories.