OpenAI GPT-5.4 Mini and Nano: Built for Agents

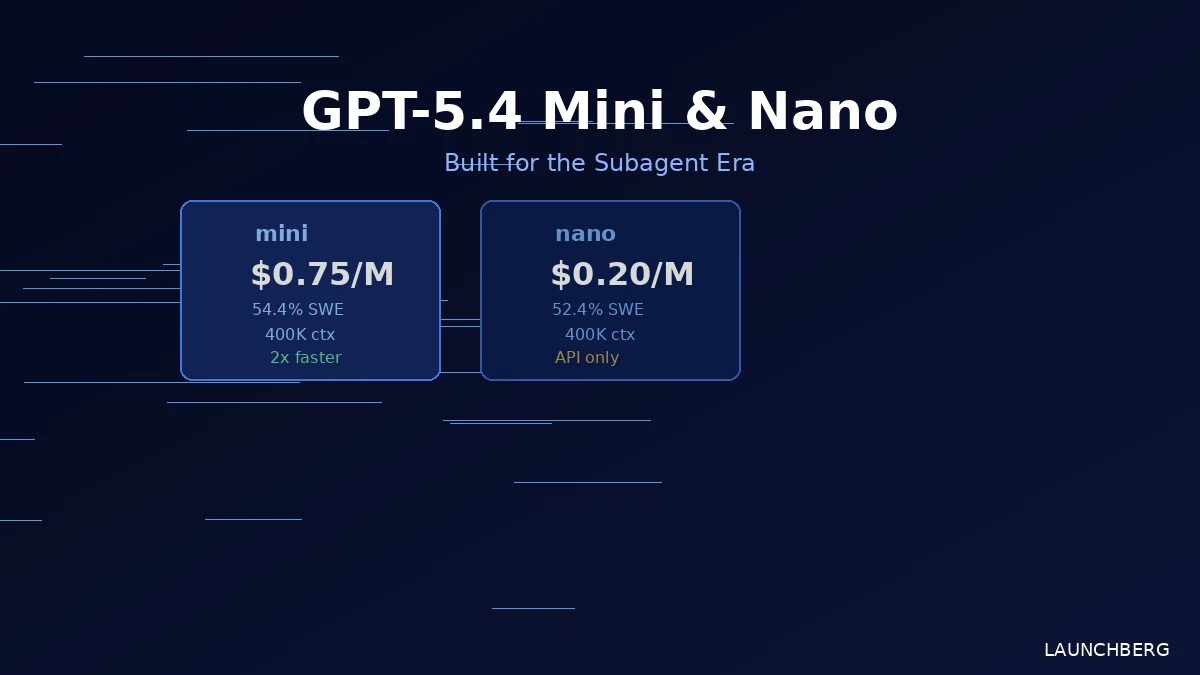

GPT-5.4 mini hits 54.4% on SWE-Bench Pro at $0.75 per million input tokens. Nano goes cheaper still. Both bring agentic tool calling to the small-model tier.

The interesting thing about GPT-5.4 mini and nano isn’t that they’re smaller versions of the flagship. It’s what they’re designed to do inside a larger system.

OpenAI is explicitly positioning these as subagent infrastructure — the fast, cheap workers that a coordinating model dispatches to handle parallel tasks while the flagship stays focused on planning. That framing matters, because it changes how developers should think about deploying them.

What You’re Actually Getting

GPT-5.4 mini lands at $0.75 per million input tokens and $4.50 per million output tokens. It has a 400,000-token context window, runs more than twice as fast as GPT-5 mini, and scores 54.4% on SWE-Bench Pro, 3.3 points behind the full GPT-5.4 (57.7%). On OSWorld-Verified, which tests computer use tasks, it hits 72.13% versus the flagship’s 75.03%.

GPT-5.4 nano goes further: $0.20 input, $1.25 output, same 400K context. It scores 52.4% on SWE-Bench Pro, surprisingly close to mini, but drops to 39% on OSWorld-Verified (against mini’s 72.13%), which suggests computer use is where the capability gap actually shows up. Nano is API-only; it won’t appear in ChatGPT.

Both models support text and image inputs, tool calling, function calling, web search, file search, and computer use.

The Subagent Pitch

OpenAI’s marketing language here — “subagent era,” “parallel grunt work,” “fast iteration” — isn’t accidental. The pitch is that you don’t route everything through an expensive flagship. Instead, the flagship plans; mini and nano execute. One model searches a codebase, another reviews a file, a third generates a test, all in parallel, all at mini/nano prices.
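The plan-then-fan-out shape described above can be sketched in a few lines. This is a minimal illustration, not OpenAI's implementation: `run_subagent` and `plan` are hypothetical stand-ins that make no network calls, and the `"flagship"` and `"mini"` labels are placeholders rather than confirmed API model identifiers.

```python
import asyncio

# Hypothetical tier labels for illustration; not confirmed API model IDs.
PLANNER = "flagship"
WORKER = "mini"


async def run_subagent(model: str, task: str) -> dict:
    """Stand-in for a real model call; swap in your own client here."""
    await asyncio.sleep(0)  # yields control, as an I/O-bound request would
    return {"model": model, "task": task, "result": f"done: {task}"}


def plan(goal: str) -> list[str]:
    """Stand-in for the planning step that the flagship model would perform."""
    return [
        f"search codebase for {goal}",
        f"review file related to {goal}",
        f"generate test for {goal}",
    ]


async def orchestrate(goal: str) -> list[dict]:
    subtasks = plan(goal)
    # Fan out: every subtask runs concurrently on the cheaper worker tier.
    # gather() returns results in the same order as the subtasks.
    return await asyncio.gather(*(run_subagent(WORKER, t) for t in subtasks))


if __name__ == "__main__":
    for item in asyncio.run(orchestrate("login bug")):
        print(item["model"], "->", item["task"])
```

The design point is that only `plan` needs the expensive model; the three subtasks are independent, so they can run concurrently on the worker tier.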

That architecture is more interesting for developers building agent systems than the raw benchmark numbers. Hebbia’s CTO noted that mini matches or outperforms competitive models on output quality and citation recall while costing less. Notion’s AI engineering team called it better than GPT-5.2 on focused editing tasks at a fraction of the compute.

The catch is the pricing jump. GPT-5 mini ran at $0.25 input / $2.00 output. GPT-5.4 mini is $0.75 / $4.50: 3x the price on the input side and 2.25x on the output side. That’s a meaningful increase for high-volume applications. Nano is cheaper in absolute terms, but it’s also 4x the price of the original nano. OpenAI’s GPT-5.3 Instant already showed the pattern: performance gains come with a price adjustment.

| Model | Input ($/M) | Output ($/M) | Context | SWE-Bench Pro | Notes |
|---|---|---|---|---|---|
| GPT-5.4 Nano | $0.20 | $1.25 | 400K | 52.4% | API-only |
| GPT-5.4 Mini | $0.75 | $4.50 | 400K | 54.4% | ChatGPT + API |
| GPT-5.4 (Full) | — | — | 400K | 57.7% | Flagship |
| GPT-5 Mini (prev.) | $0.25 | $2.00 | — | 45.7% | Previous gen |
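To see what the price change means for a bill, here is a rough cost calculator using the per-million-token prices from the table. The dictionary keys are illustrative labels, not confirmed API model identifiers, and the workload (100M input tokens, 10M output tokens) is a made-up example.

```python
# USD per million tokens, copied from the table in this article.
# Keys are illustrative labels, not confirmed API model identifiers.
PRICES = {
    "gpt-5-mini": {"input": 0.25, "output": 2.00},
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total cost in USD for a given token volume."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def price_ratio(new: str, old: str, kind: str) -> float:
    """How many times the old price the new price is, for 'input' or 'output'."""
    return PRICES[new][kind] / PRICES[old][kind]


if __name__ == "__main__":
    # Hypothetical workload: 100M input tokens, 10M output tokens.
    for model in PRICES:
        print(f"{model}: ${cost_usd(model, 100_000_000, 10_000_000):.2f}")
    print("input ratio:", price_ratio("gpt-5.4-mini", "gpt-5-mini", "input"))
    print("output ratio:", price_ratio("gpt-5.4-mini", "gpt-5-mini", "output"))
```

For that hypothetical workload the totals come to $45 on GPT-5 mini, $120 on GPT-5.4 mini, and $32.50 on GPT-5.4 nano. The blended increase from GPT-5 mini to GPT-5.4 mini is therefore closer to 2.7x than the 3x headline figure, because output pricing rose less steeply than input pricing.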

What Mini Unlocks

The capability that matters most isn’t benchmark scores — it’s reliable agentic tool calling. That was previously a premium-model feature. Moving it into the mini tier opens up customizable in-app agents that don’t require routing to a flagship model for every tool invocation.

That’s a real architectural shift. Applications that needed GPT-5.4 for reliable tool use can now run a significant chunk of that work through mini, cutting costs while getting something close to flagship performance on coding and computer use tasks.

For nano, the use cases are narrower but clear: classification, extraction, ranking, triage, and guardrail checks. These are tasks where you need a fast, cheap model to process lots of data and you don’t need it to reason deeply.
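One way to act on that split is a small routing table that sends each task type to the cheapest tier expected to handle it. This is a sketch under assumptions: the nano categories come from the list above, while the mini categories, the tier labels, and the fall-back-to-flagship rule are illustrative choices rather than OpenAI guidance.

```python
# Task types the article names as a fit for nano.
NANO_TASKS = {"classification", "extraction", "ranking", "triage", "guardrail"}

# Assumed here for illustration: work that needs tool use or coding goes to mini.
MINI_TASKS = {"tool_call", "coding", "computer_use"}


def pick_tier(task_type: str) -> str:
    """Return a hypothetical tier label for a task type."""
    if task_type in NANO_TASKS:
        return "nano"
    if task_type in MINI_TASKS:
        return "mini"
    # Anything unrecognized (planning, open-ended reasoning) stays on the flagship.
    return "flagship"


if __name__ == "__main__":
    for task in ("triage", "coding", "planning"):
        print(task, "->", pick_tier(task))
```

Defaulting unknown task types to the flagship is the conservative choice: a misrouted task costs more than it needed to instead of failing quietly on a model that is too small for it.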

Both models are available in the API now. Mini is also in ChatGPT for Free and Go users. If you’re building GPT-5.4-based agent pipelines, the mini tier is where the economics start to work. And for anyone benchmarking against Claude Code’s agentic coding workflows, the SWE-Bench numbers put mini in genuinely competitive territory.