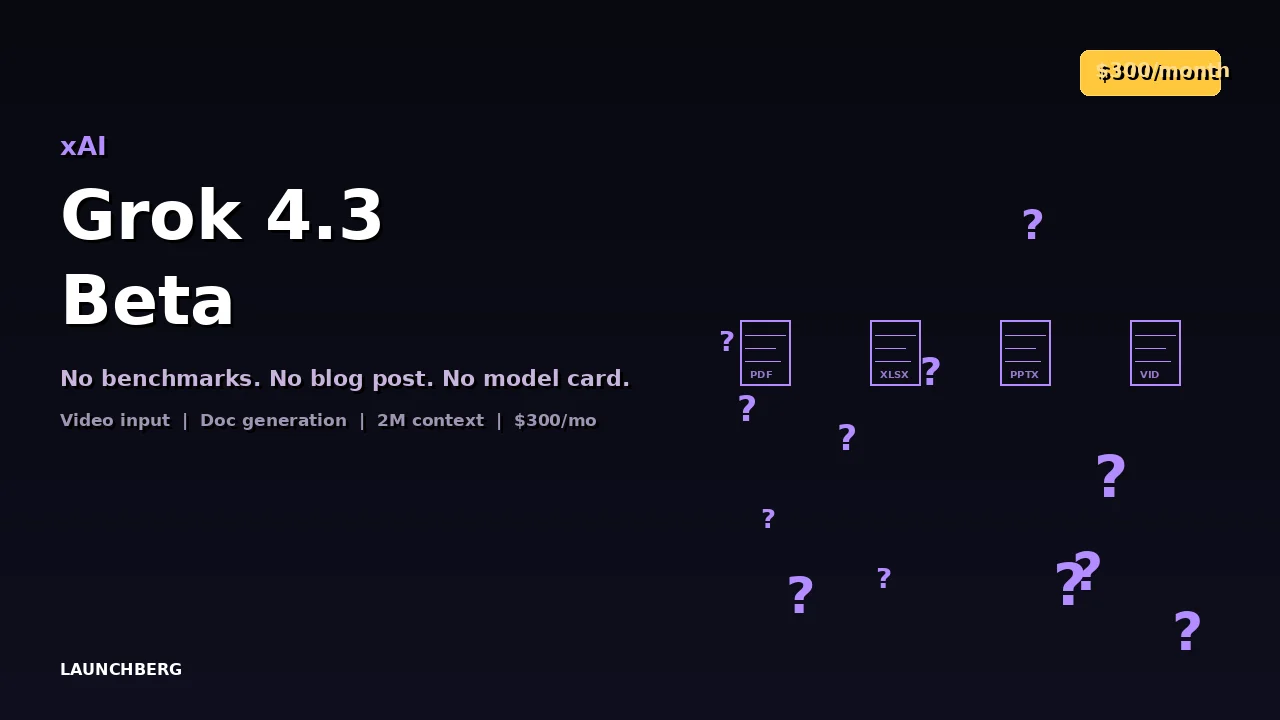

Grok 4.3 Beta: $300/Month, No Benchmarks, No Blog Post

xAI quietly drops Grok 4.3 with native video input, document generation, and a 2M token context window — but only for SuperGrok Heavy subscribers at $300/month. No model card, no benchmarks, no announcement.

xAI released Grok 4.3 beta on April 17, 2026, with no blog post, no model card, no third-party benchmarks, and no tier-1 outlet coverage. You find out about it by opening grok.com and noticing the model selector changed.

This is becoming a pattern. xAI ships models the way some companies ship hotfixes — quietly, frequently, with documentation arriving weeks later if at all. For a $300/month product, the lack of transparency is remarkable.

What’s New

Grok 4.3 adds native video input: feed it a video clip and it can reason about what it sees over the course of a conversation. This puts it in the same multimodal tier as GPT-5.4 and Gemini 3.1 Pro, though without published benchmarks there’s no way to compare quality.

The model can now generate downloadable PDFs, populated spreadsheets, and PowerPoint decks directly from conversation. Early testers report the formatted output is genuinely usable: not a gimmick, but actual documents you could hand to someone. Claude and ChatGPT already offer their own file-creation features, so the real question is whether Grok’s output is better, and without published evaluations that can’t be checked.

The 2 million token context window carries over from Grok 4.20, still the largest among Western closed models. The 16-agent Heavy system — where multiple Grok instances collaborate on complex tasks — is also retained.

What We Don’t Know

Early reports suggest roughly 0.5 trillion parameters, with a 1-trillion-parameter checkpoint still in training. The model’s deeper reasoning is reportedly the result of longer training runs. But xAI hasn’t published anything official: no model card, no benchmark tables, no training methodology.

That’s a problem. At $300/month for SuperGrok Heavy (the only tier that gets 4.3), this is the most expensive consumer AI subscription on the market. Claude Pro and ChatGPT Plus run $20/month. Paying 15x more without seeing a single benchmark is a trust exercise that xAI hasn’t earned through transparency.

The Missing Feature

No persistent memory between sessions. ChatGPT has had it for over a year. Claude has had it since late 2025. At this price point, the absence is hard to defend — every conversation starts from zero, which undermines the value of a model that’s supposed to be your premium AI assistant.

The Pattern

Grok’s release cadence is aggressive. Version 4.20 shipped in March; 4.3 followed in April. Each iteration adds capabilities, but the documentation and benchmarking lag behind by weeks. For developers trying to evaluate whether Grok belongs in their stack, this makes the model essentially unbenchmarkable at launch.

If xAI published a model card alongside the release, the conversation would be about capabilities. Instead, it’s about process. That’s a self-inflicted wound for a model that — based on early user reports — might actually be very good.