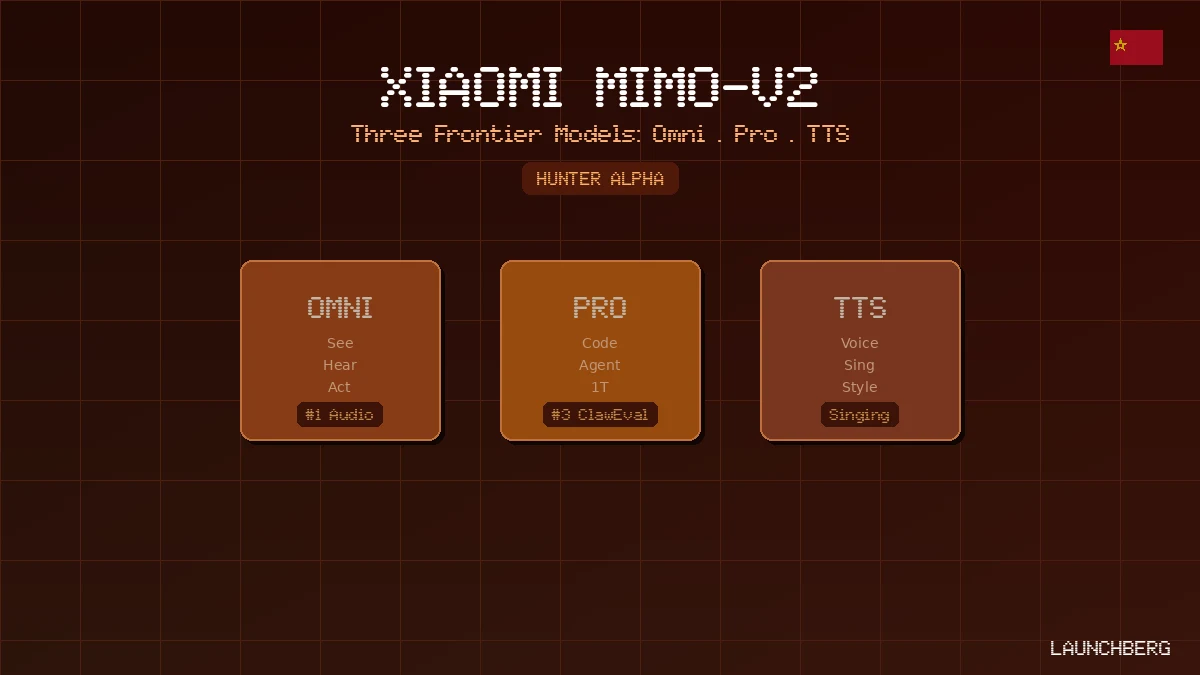

Xiaomi MiMo-V2: Three Models That See, Code, and Sing

Xiaomi drops three frontier models at once — an omni-modal agent, a 1T-parameter coding model that topped OpenRouter under a fake name, and a TTS model that can sing. All at a fraction of Claude's price.

A week before this announcement, an anonymous model called “Hunter Alpha” appeared on OpenRouter. It topped the daily usage charts for multiple days and blew past 1 trillion tokens of total API calls. Developers were posting about it as a sleeper hit — great at coding, unusually good at agentic tasks, priced below everything comparable.

Hunter Alpha was an early build of MiMo-V2-Pro. Xiaomi just revealed it alongside two siblings: MiMo-V2-Omni (omni-modal perception and agentic capability) and MiMo-V2-TTS (voice synthesis with natural language style control and singing). Three frontier models, released simultaneously, from a company most people still associate with smartphones.

MiMo-V2-Pro: The Coding and Agent Model

The numbers first. MiMo-V2-Pro has over 1 trillion total parameters with 42B active — roughly 3x the size of MiMo-V2-Flash. It uses a Hybrid Attention mechanism (7:1 ratio), supports a 1 million-token context window, and ranks #8 worldwide and #2 among Chinese LLMs on the Artificial Analysis Intelligence Index.

On benchmarks that matter for coding and agent work: SWE-bench Verified hits 78%, SWE-bench Multilingual lands at 57.1% (beating GPT-5.2’s 54%), and ClawEval — the OpenClaw standard for real-world agent evaluation — reaches 61.5%, which is #3 globally and closing on Claude Opus 4.6’s 66.3%. PinchBench average is 81.0, also #3 globally.

The pricing is where it gets aggressive:

| Model | Input ($/M) | Output ($/M) | Cache Read ($/M) | Context |
|---|---|---|---|---|
| MiMo-V2-Pro (≤256K) | $1.00 | $3.00 | $0.20 | 1M |
| MiMo-V2-Pro (256K–1M) | $2.00 | $6.00 | $0.40 | 1M |
| Claude Sonnet 4.6 | $3.00 | $15.00 | $0.30 | 200K |
| Claude Opus 4.6 | $5.00 | $25.00 | $0.50 | 200K |

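At the ≤256K tier, that’s a third of Sonnet’s input price and a fifth of its output price, with 5x the context window. Above 256K the rates double, which still undercuts Sonnet on both input and output. Cache writes are temporarily free. The API is live now at platform.xiaomimimo.com.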
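To make the price gap concrete, here is a minimal sketch that turns the table's list prices into a per-request cost. Several things are assumptions for illustration, not confirmed by Xiaomi: the dictionary keys are made-up labels rather than real API model IDs, the tier is assumed to be chosen by the request's input token count, and the boundary is taken as 256,000 tokens. Cache reads and the temporary free cache writes are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


# List prices from the table above. Keys are illustrative labels only.
RATES = {
    "mimo-v2-pro-le-256k": Rate(1.00, 3.00),
    "mimo-v2-pro-256k-1m": Rate(2.00, 6.00),
    "claude-sonnet-4.6": Rate(3.00, 15.00),
    "claude-opus-4.6": Rate(5.00, 25.00),
}

# Assumption: the tier switches on input length at exactly 256,000 tokens.
TIER_BOUNDARY = 256_000


def mimo_rate(input_tokens: int) -> Rate:
    """Pick the MiMo-V2-Pro tier for a request (assumed input-length based)."""
    if input_tokens <= TIER_BOUNDARY:
        return RATES["mimo-v2-pro-le-256k"]
    return RATES["mimo-v2-pro-256k-1m"]


def cost(rate: Rate, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one request, ignoring any cache pricing."""
    total = input_tokens * rate.input_per_m + output_tokens * rate.output_per_m
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical agent turn: 100K tokens in, 10K tokens out.
    tokens_in, tokens_out = 100_000, 10_000
    print(f"MiMo-V2-Pro: ${cost(mimo_rate(tokens_in), tokens_in, tokens_out):.2f}")
    for name in ("claude-sonnet-4.6", "claude-opus-4.6"):
        print(f"{name}: ${cost(RATES[name], tokens_in, tokens_out):.2f}")
```

For that hypothetical 100K-in, 10K-out request, the list prices work out to about $0.13 on MiMo-V2-Pro, $0.45 on Sonnet 4.6, and $0.75 on Opus 4.6. The blended ratio depends on how output-heavy the workload is, since the discount is larger on output than on input.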

Xiaomi is partnering with five agent development frameworks — OpenClaw, OpenCode, KiloCode, Blackbox, and Cline — offering one week of free API access for developers worldwide.

MiMo-V2-Omni: One Model for Everything

MiMo-V2-Omni fuses dedicated image, video, and audio encoders into a single backbone. Not separate models stitched together — a unified perceptual stream that processes all modalities simultaneously.

The benchmark positioning against closed-source frontier models is surprisingly competitive. On audio understanding (MMAU-Pro), it scores 69.4% — beating Gemini 3 Pro’s 65.0%. On MMMU-Pro (multimodal reasoning), it hits 76.8%, exceeding Claude Opus 4.6’s 73.9%. On video understanding (Video-MME), it reaches 85.3%. It supports over 10 hours of continuous audio input natively — to Xiaomi’s knowledge, the first omni model operating at that scale without degradation.

The agentic demonstrations are what make the pitch concrete. Xiaomi fed raw dashcam footage and asked the model to act as the visual brain of an autonomous driving system. It produced a timestamped risk assessment identifying pedestrians, blind corners, lane narrowing, jaywalkers, and construction zones — all from a single video pass. In another demo, it controlled a browser end-to-end: researching product recommendations on Xiaohongshu, switching to JD.com to compare prices, negotiating with customer service via chat, and completing checkout. No human intervention.

MiMo-V2-TTS: Voice That Understands Context

The TTS model is the one that’ll surprise people most. Pretrained on over 100 million hours of speech data and refined with multi-dimensional reinforcement learning, it replaces the usual dropdown menu of emotions (happy, sad, angry) with a text box. Describe the voice you want in natural language — “sleepy, just woke up, slightly hoarse” or “angry but trying to stay calm” — and the model generates speech that matches.

It handles paralinguistic events naturally: coughs, sighs, hesitation fillers, sharp intakes of breath, nervous laughter. These aren’t audio clips spliced in — the model generates them as integrated components of the speech output, placed where they contextually belong.

The singing capability is worth highlighting separately. MiMo-V2-TTS synthesizes singing voice within the same unified model — no separate model, no mode switching. The same architecture that whispers a confession can belt out a pop chorus. Xiaomi claims it’s the only commercially available TTS API that natively supports both speaking and singing generation.

It also supports Chinese dialects (Northeastern Mandarin, Sichuan, Cantonese, Taiwanese) and character voices (Sun Wukong, Lin Daiyu) via the same natural language interface. The rich text understanding is particularly sharp — ALL CAPS maps to emphasis, character repetition maps to speech rhythm, punctuation shapes intonation contours.
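The three text cues are easy to spot in a script before it is sent for synthesis. The sketch below is only an illustration of what those cues look like in input text. It says nothing about how MiMo-V2-TTS interprets them internally, the function name is hypothetical, and the capital-letter check only applies to Latin-script text.

```python
import re


def find_style_cues(text: str) -> dict:
    """Flag the surface cues described above; purely illustrative."""
    return {
        # Words of two or more letters written entirely in capitals.
        "all_caps": re.findall(r"\b[A-Z]{2,}\b", text),
        # Any word character repeated three or more times in a row.
        "repetition": [m.group(0) for m in re.finditer(r"(\w)\1{2,}", text)],
        # Runs of sentence punctuation such as "...", "?!" or "!".
        "punctuation": re.findall(r"[.!?…]+", text),
    }


cues = find_style_cues("I said NO... that is sooo unfair!")
print(cues)
```

A check like this can help a writer see which parts of a script are likely to carry prosodic weight, but the actual rendering is up to the model.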

The OpenClaw Ecosystem Play

All three models plug into OpenClaw, the open-source agent framework that’s emerging as a standard across multiple Chinese and international AI labs. MiMo-V2-Pro serves as the reasoning brain, MiMo-V2-Omni handles perception, and MiMo-V2-TTS provides the voice layer. The stack is designed to work together — and to work with anyone else building on OpenClaw.

What This Means

A year ago, Xiaomi’s AI ambitions looked like a feature play for its phone ecosystem. MiMo-V2 changes that framing. The Pro model competes with Claude Sonnet and GPT-5.2 on coding and agent tasks at a third of Sonnet’s input price and a fifth of its output price. The Omni model beats Gemini 3 Pro on audio understanding and matches it on video. The TTS model combines speaking and singing in one API, which Xiaomi says no commercial competitor currently offers.

Z.ai’s GLM-5-Turbo already showed that Chinese labs can compete aggressively on price while matching frontier quality. Alibaba’s Wukong is building the enterprise agent layer. Xiaomi is going after the full stack — perception, reasoning, voice — all at once.

The Hunter Alpha stunt was smart marketing, but the substance behind it is real. Three models, all live, all priced to undercut. The question for Western labs isn’t whether to take this seriously — it’s how quickly the price pressure reaches their enterprise customers.