Z.ai Ships GLM-5-Turbo for Agentic AI Workloads

Zhipu AI's closed-source GLM-5-Turbo targets the OpenClaw agent ecosystem with 744B parameters, a 200K context window, and pricing roughly one-fifth of Claude Opus's. Shares jumped 16%.

The Chinese frontier model market has a new entry optimized for a specific job: running AI agents. Z.ai (formerly Zhipu AI, the Tsinghua University spinoff that went public in Hong Kong in January) launched GLM-5-Turbo on March 16. It is a closed-source variant of the company's open-weight GLM-5, tuned for agent-driven workflows and OpenClaw-style tasks. Shares jumped 16% to HK$615 on the announcement.

The positioning is deliberate. While Alibaba's Qwen and DeepSeek compete on general-purpose reasoning, Z.ai is betting that agentic AI needs a model built specifically for it — not a chat model repurposed with a system prompt.

What Makes It "Agentic"

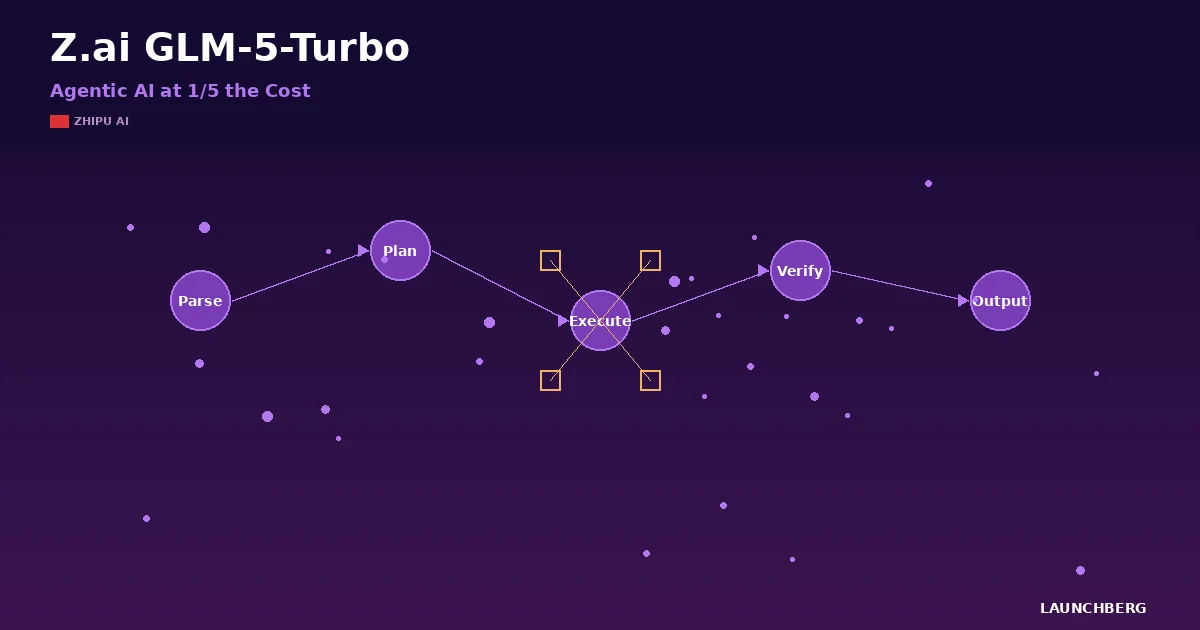

GLM-5-Turbo optimizes for the things agents actually need: long-chain execution stability across multi-step tasks, precise tool invocation, complex instruction decomposition, and self-correction when things go wrong. The base GLM-5 is a 744B-parameter MoE model with 40B active parameters. The Turbo variant keeps that architecture but tunes for speed and reliability in extended autonomous workflows.

The 202,752-token context window, with a 131,072-token maximum output, supports the kind of persistent, stateful execution that agentic systems demand. The long context matters less for reading documents than for holding the state agents accumulate: tool outputs, intermediate results, error logs, retry histories. A 32K window runs out fast when an agent is ten steps into a file refactoring task.
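To make the state-accumulation point concrete, here is a back-of-the-envelope sketch. The per-step token counts are invented placeholders, not measurements of GLM-5-Turbo or of any real agent; only the two window sizes come from the article.

```python
# Illustrative only: SYSTEM_TOKENS and TOKENS_PER_STEP are hypothetical
# placeholders chosen for the example, not measured values.

def steps_until_full(window_tokens, system_tokens, tokens_per_step):
    """Return how many complete agent steps fit in the context window."""
    available = window_tokens - system_tokens
    return max(0, available // tokens_per_step)

# Hypothetical workload: 2,000 tokens of instructions, and each step appends
# about 3,000 tokens of tool output, intermediate results, and error logs.
SYSTEM_TOKENS = 2_000
TOKENS_PER_STEP = 3_000

small = steps_until_full(32_768, SYSTEM_TOKENS, TOKENS_PER_STEP)
large = steps_until_full(202_752, SYSTEM_TOKENS, TOKENS_PER_STEP)
print(f"32K window: {small} steps; 202,752-token window: {large} steps")
```

Under these assumed numbers the 32K window fills after 10 steps, while the larger window leaves room for 66. Real agents vary widely in how much state each step adds, so treat the ratio, not the absolute counts, as the takeaway.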

Z.ai trained the model with specialized reinforcement learning — asynchronous RL infrastructure that decouples generation from training, plus dedicated agent RL algorithms for long-horizon interactions. The result: 62.0 on BrowseComp and 56.2 on Terminal-Bench 2.0, which are agent-specific benchmarks testing real-world tool use and command-line task completion.

The Numbers That Matter

| Spec | GLM-5-Turbo | GLM-5 (base) |
|---|---|---|
| Parameters | 744B total / 40B active | Same |
| Architecture | MoE + DeepSeek Sparse Attention | Same |
| Context Window | 202,752 tokens | 202,752 tokens |
| Max Output | 131,072 tokens | 131,072 tokens |
| License | Closed-source (API only) | MIT (open-source) |
| Pricing (input) | $0.96/M tokens | Self-hosted |
| Pricing (output) | $3.20/M tokens | Self-hosted |
| Training Hardware | Huawei Ascend 910B | Same |

The pricing is the attention-getter. At $0.96 per million input tokens and $3.20 per million output tokens, GLM-5-Turbo costs roughly one-fifth what Claude Opus charges. For agentic workloads that burn through tokens — agents generate far more tokens than human conversations — that cost differential compounds fast.
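A rough sketch of how that differential plays out over a single agent session follows. The GLM-5-Turbo prices are the ones quoted above; the token counts are hypothetical, and the comparison prices are simply the article's "roughly 5x" figure applied to both rates, not a published list price.

```python
# GLM-5-Turbo prices as quoted in the article (USD per million tokens).
GLM5_TURBO_INPUT = 0.96
GLM5_TURBO_OUTPUT = 3.20

# ASSUMPTION: comparison prices are derived from the article's "roughly 5x"
# claim, not taken from any vendor's price list. Substitute real list prices.
COST_MULTIPLE = 5
OTHER_INPUT = GLM5_TURBO_INPUT * COST_MULTIPLE
OTHER_OUTPUT = GLM5_TURBO_OUTPUT * COST_MULTIPLE

def run_cost(input_tokens, output_tokens, input_price, output_price):
    """Cost in USD of one run, given per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000

# Hypothetical agent session; these token counts are placeholders.
INPUT_TOKENS = 5_000_000
OUTPUT_TOKENS = 1_000_000

glm_cost = run_cost(INPUT_TOKENS, OUTPUT_TOKENS, GLM5_TURBO_INPUT, GLM5_TURBO_OUTPUT)
other_cost = run_cost(INPUT_TOKENS, OUTPUT_TOKENS, OTHER_INPUT, OTHER_OUTPUT)

print(f"GLM-5-Turbo: ${glm_cost:.2f}")
print(f"Comparison model at {COST_MULTIPLE}x: ${other_cost:.2f}")
print(f"Difference per session: ${other_cost - glm_cost:.2f}")
```

Because a flat multiple scales linearly, the saving per session grows in direct proportion to token volume. If the real input and output price ratios differ from each other, the effective multiple depends on the input-to-output mix of the workload.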

Where It Sits in the Chinese AI Landscape

The base GLM-5 scores 50 on Artificial Analysis's intelligence leaderboard, behind Gemini 3.1 Pro (57), GPT-5.4 (57), and Claude Opus 4.6 (53), and ahead of Kimi K2.5 (47). On coding specifically, the most relevant benchmark for agent tasks, GLM-5 hits 77.8% on SWE-bench Verified, which is competitive with the leading models on the market. The model was trained on 28.5 trillion tokens using Huawei's Ascend 910B chips, not Nvidia hardware.

Against other Chinese models: Qwen 3.5 Plus offers a 1M context window versus GLM-5's 200K, but Z.ai argues that raw context length matters less than agent-specific tuning. DeepSeek V3 remains strong on math and reasoning. Kimi K2.5 from Moonshot AI scores higher on general benchmarks. But none of them is tuned specifically for the OpenClaw agent framework the way GLM-5-Turbo is.

The OpenClaw Bet

Z.ai's real strategy isn't the model itself; it's the ecosystem play. OpenClaw is becoming the dominant open-source framework for persistent AI agents (Nvidia describes it as the fastest-growing open-source project in history), and Z.ai wants GLM-5-Turbo to be the default model powering those agents. The model can already be used with Claude Code, Kilo Code, Cline, OpenCode, and Clawdbot.

If that sounds like the "picks and shovels" of the agentic era — cheap, reliable inference optimized for the specific framework everyone's building on — that's exactly what it is. The closed-source Turbo variant exists because enterprises want managed inference with SLAs, not self-hosted open weights. Z.ai keeps the base GLM-5 open (MIT license) for researchers and hobbyists, while monetizing the agent-optimized version through its API and OpenRouter.

Should You Care?

If you're building agents and paying per token, GLM-5-Turbo's price point warrants a benchmark test on your own workload. The roughly 5x cost advantage over Claude Opus is substantial, and the agent-specific tuning targets the failure modes that plague agentic systems: dropped tool calls, instruction drift over long chains, and an inability to recover from errors.
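One simple way to start such a test is to score how often a model's tool calls are well-formed. The sketch below is a minimal harness under stated assumptions: the tool registry, the JSON call format, and the sample responses are all hypothetical and do not reflect GLM-5-Turbo's actual output or any framework's schema.

```python
import json

# Hypothetical tool registry: tool name -> required argument names.
TOOLS = {"read_file": {"path"}, "run_command": {"command"}}

def is_valid_tool_call(raw):
    """True if raw is a JSON object naming a known tool with all required arguments."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(call, dict):
        return False
    name = call.get("name")
    args = call.get("arguments")
    if not isinstance(name, str) or not isinstance(args, dict):
        return False
    required = TOOLS.get(name)
    return required is not None and required.issubset(args)

# Made-up recorded responses standing in for real model output.
SAMPLE_RESPONSES = [
    '{"name": "read_file", "arguments": {"path": "src/main.py"}}',
    '{"name": "run_command", "arguments": {}}',             # missing argument
    '{"name": "delete_repo", "arguments": {"path": "."}}',  # unknown tool
    'read_file(src/main.py)',                               # not JSON
    '{"name": "run_command", "arguments": {"command": "pytest -q"}}',
]

valid = sum(is_valid_tool_call(r) for r in SAMPLE_RESPONSES)
valid_rate = valid / len(SAMPLE_RESPONSES)
print(f"valid tool calls: {valid}/{len(SAMPLE_RESPONSES)} ({valid_rate:.0%})")
```

Running the same recorded tasks against two models and comparing the valid-call rate gives a first, coarse signal. Instruction drift and error recovery need longer multi-step traces to measure.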

The limitation is availability. Z.ai's API, OpenRouter, and a handful of coding tools — that's the current distribution. No AWS Bedrock. No Azure. No Google Cloud Vertex. For teams already deployed on Western cloud infrastructure, the integration story adds friction that partially offsets the cost savings.
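For teams evaluating the OpenRouter route, a request might look roughly like the sketch below, which uses OpenRouter's OpenAI-compatible chat completions endpoint. The model slug is a guess made for illustration and should be checked against the provider's model list; the API key is a placeholder, and the request is built but deliberately not sent.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# ASSUMPTION: placeholder slug for illustration; look up the real identifier.
MODEL_SLUG = "z-ai/glm-5-turbo"

def build_request(api_key, user_message, model=MODEL_SLUG):
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

request = build_request("YOUR_API_KEY", "List the files in the current directory.")
print(request.get_method(), request.full_url)

# Sending is left commented out so the sketch runs without a key or network:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["message"]["content"])
```

Since the request shape is the same one many agent frameworks already emit, switching models is often a matter of changing the base URL and model name. The friction described above comes more from procurement, compliance, and data-residency requirements than from code.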

Z.ai went public two months ago. GLM-5-Turbo is their first major post-IPO product bet. A 16% stock pop suggests the market thinks the agentic AI niche is worth owning — even in a field crowded with generalist models from Google, OpenAI, and Anthropic.